In-Class Exercise 04

Overview

This is a walkthrough of In-Class Exercise 4.

Getting Started - Plotly and Statistical Visualization

Installing and Loading R Packages

pacman::p_load(plotly, DT, patchwork, crosstalk, ggstatsplot, readxl, performance, parameters, see, tidyverse)

Importing Data

The code chunk below imports the data from the Exam_data.csv file into R.

exam_data <- read_csv("data/Exam_data.csv")

Working with visual variable: plot_ly() method

In the code chunk below, the color argument is mapped to a qualitative visual variable (i.e. RACE).

plot_ly(data = exam_data,
        x = ~ENGLISH,
        y = ~MATHS,
        color = ~RACE)

Creating an interactive scatter plot: ggplotly() method

The code chunk below plots an interactive scatter plot by using ggplotly().

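p <- ggplot(data = exam_data,
            aes(x = MATHS,
                y = ENGLISH)) +
  geom_point(size = 1) +  # point size; dotsize belongs to geom_dotplot()
  coord_cartesian(xlim = c(0, 100),
                  ylim = c(0, 100))

ggplotly(p)  # convert the static ggplot object into an interactive widget

Two-sample mean test: ggbetweenstats()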

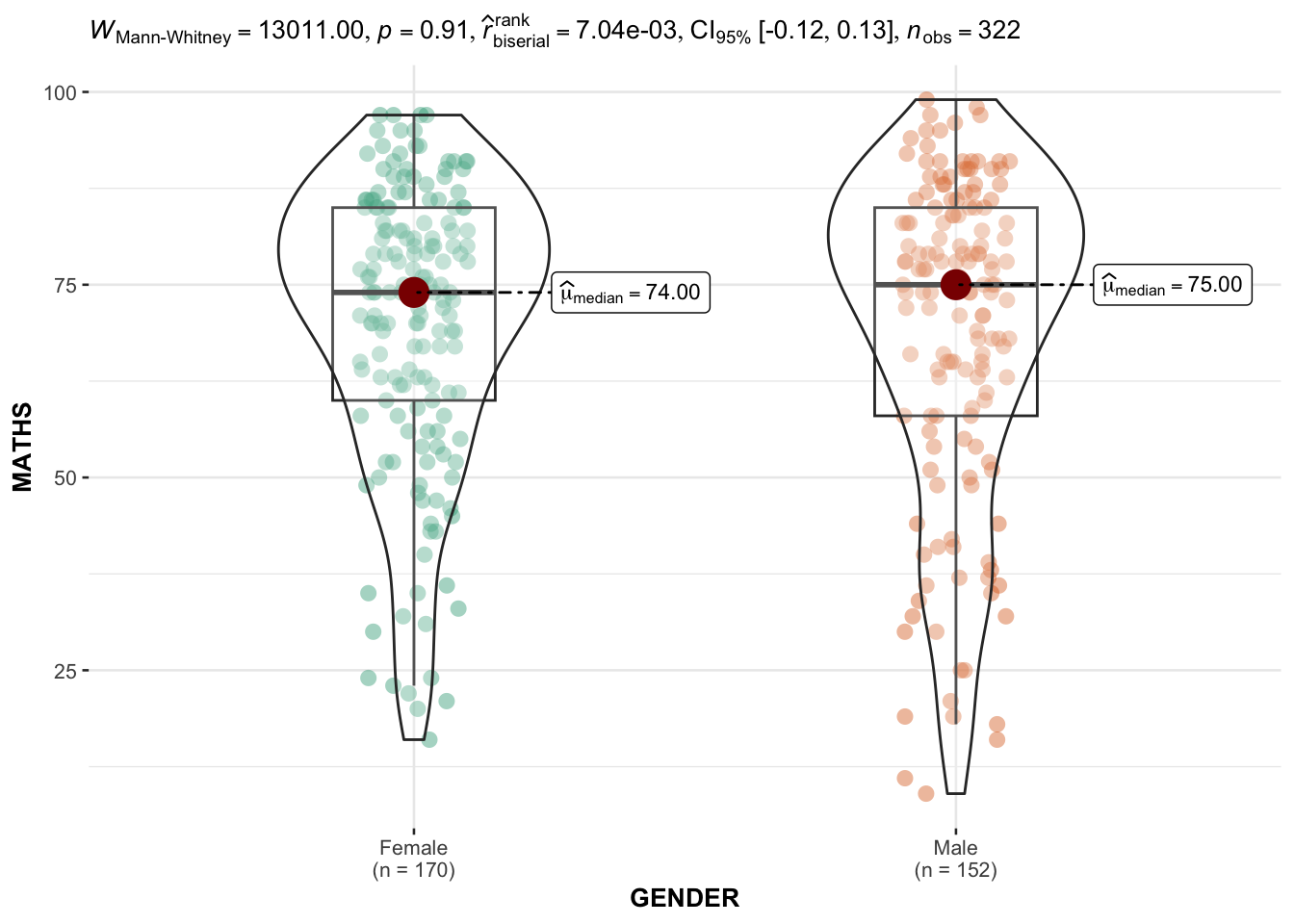

In the code chunk below, ggbetweenstats() is used to build a visual for a two-sample test of Maths scores by gender. Because type = "np" is set, the non-parametric version of the test is reported.

ggbetweenstats(
  data = exam_data,
  x = GENDER,
  y = MATHS,
  type = "np",
  messages = FALSE
)

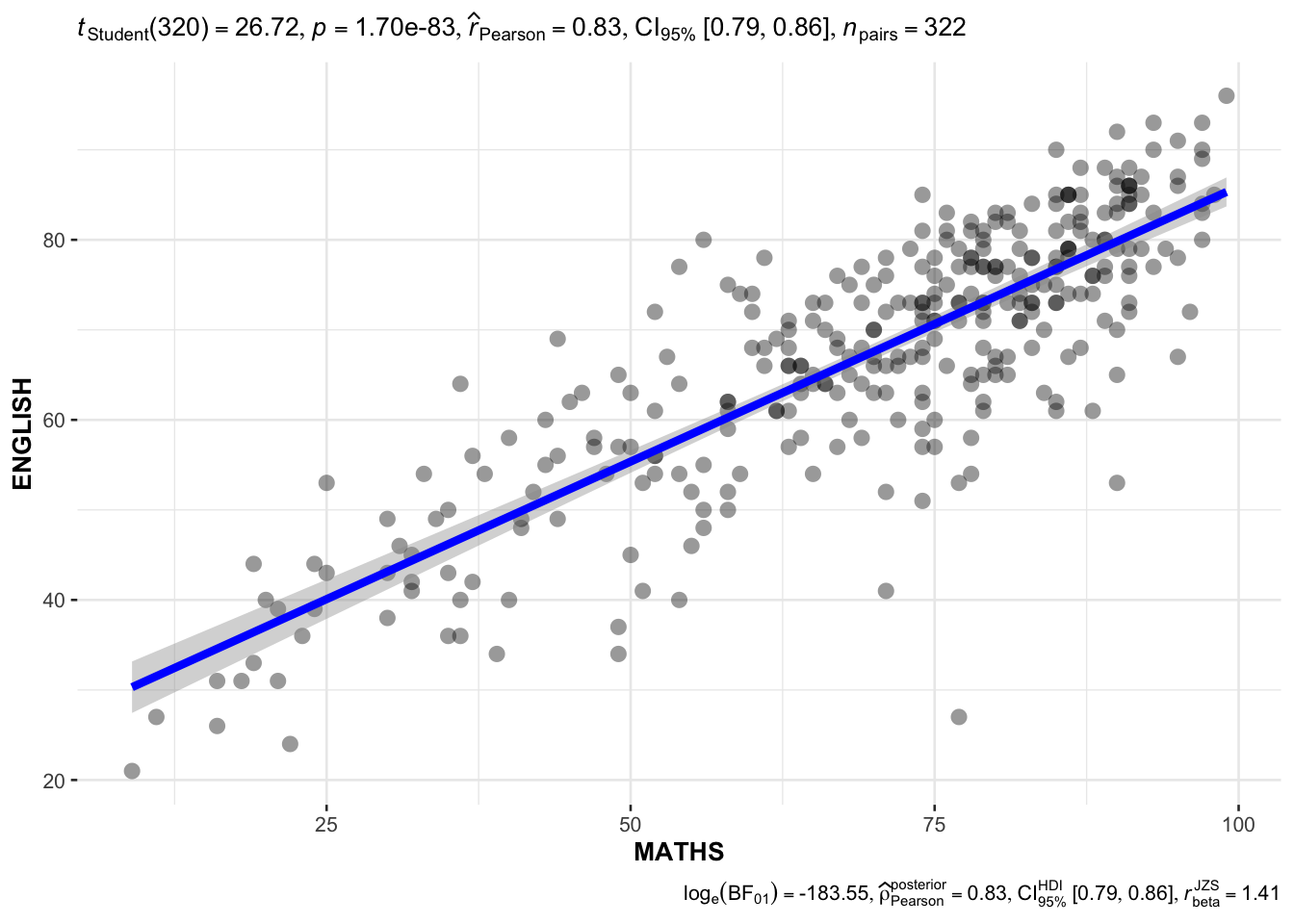
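For two groups, the non-parametric comparison requested with type = "np" is the Mann-Whitney U test (also known as the Wilcoxon rank-sum test). As a rough cross-check outside ggstatsplot, the same kind of test can be run with wilcox.test() from base R. The sketch below is only an illustration: it uses the built-in mtcars data as a stand-in because Exam_data.csv does not ship with R, so mpg and am are placeholders for MATHS and GENDER.

```r
# Stand-in data: mtcars ships with R.
# mpg plays the role of MATHS, am (0/1) plays the role of GENDER.
res <- wilcox.test(mpg ~ am, data = mtcars, exact = FALSE)

# Rank-sum statistic and p-value of the two-group comparison
print(res$statistic)
print(res$p.value)
```

With the exam data, the equivalent call would be wilcox.test(MATHS ~ GENDER, data = exam_data), assuming GENDER has exactly two levels.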

Significant Test of Correlation: ggscatterstats()

In the code chunk below, ggscatterstats() is used to build a visual for a significance test of correlation between Maths scores and English scores.

ggscatterstats(
  data = exam_data,
  x = MATHS,
  y = ENGLISH,
  marginal = FALSE
)
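The correlation test behind such a plot can also be inspected directly with cor.test() from base R. The sketch below assumes a Pearson correlation (the parametric default) and again uses the built-in mtcars data as a stand-in, with mpg and wt as placeholders for MATHS and ENGLISH.

```r
# Stand-in data: mpg and wt are placeholders for the two exam scores.
res <- cor.test(mtcars$mpg, mtcars$wt, method = "pearson")

print(res$estimate)  # Pearson correlation coefficient
print(res$conf.int)  # 95% confidence interval for the coefficient
print(res$p.value)   # p-value for H0: correlation equals zero
```

With the exam data, the equivalent call would be cor.test(exam_data$MATHS, exam_data$ENGLISH).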

Getting Started - Visualizing Models

Installing and Loading R Packages

pacman::p_load(plotly, DT, patchwork, crosstalk, ggstatsplot, readxl, performance, parameters, see, tidyverse)

Importing Data

In the code chunk below, read_xls() of the readxl package is used to import the data worksheet of the ToyotaCorolla.xls workbook into R.

car_resale <- read_xls("data/ToyotaCorolla.xls",
                       "data")

car_resale

# A tibble: 1,436 × 38
      Id Model       Price Age_0…¹ Mfg_M…² Mfg_Y…³     KM Quart…⁴ Weight Guara…⁵
   <dbl> <chr>       <dbl>   <dbl>   <dbl>   <dbl>  <dbl>   <dbl>  <dbl>   <dbl>
 1    81 TOYOTA Cor… 18950      25       8    2002  20019     100   1180       3
 2     1 TOYOTA Cor… 13500      23      10    2002  46986     210   1165       3
 3     2 TOYOTA Cor… 13750      23      10    2002  72937     210   1165       3
 4     3 TOYOTA Co…  13950      24       9    2002  41711     210   1165       3
 5     4 TOYOTA Cor… 14950      26       7    2002  48000     210   1165       3
 6     5 TOYOTA Cor… 13750      30       3    2002  38500     210   1170       3
 7     6 TOYOTA Cor… 12950      32       1    2002  61000     210   1170       3
 8     7 TOYOTA Co…  16900      27       6    2002  94612     210   1245       3
 9     8 TOYOTA Cor… 18600      30       3    2002  75889     210   1245       3
10    44 TOYOTA Cor… 16950      27       6    2002 110404     234   1255       3
# … with 1,426 more rows, 28 more variables: HP_Bin <chr>, CC_bin <chr>,
#   Doors <dbl>, Gears <dbl>, Cylinders <dbl>, Fuel_Type <chr>, Color <chr>,
#   Met_Color <dbl>, Automatic <dbl>, Mfr_Guarantee <dbl>,
#   BOVAG_Guarantee <dbl>, ABS <dbl>, Airbag_1 <dbl>, Airbag_2 <dbl>,
#   Airco <dbl>, Automatic_airco <dbl>, Boardcomputer <dbl>, CD_Player <dbl>,
#   Central_Lock <dbl>, Powered_Windows <dbl>, Power_Steering <dbl>,
#   Radio <dbl>, Mistlamps <dbl>, Sport_Model <dbl>, Backseat_Divider <dbl>, …

Multiple Regression Model using lm()

The code chunk below is used to calibrate a multiple linear regression model by using lm() of Base Stats of R.

model <- lm(Price ~ Age_08_04 + Mfg_Year + KM +

Weight + Guarantee_Period, data = car_resale)

model

Call:

lm(formula = Price ~ Age_08_04 + Mfg_Year + KM + Weight + Guarantee_Period,

data = car_resale)

Coefficients:

(Intercept) Age_08_04 Mfg_Year KM

-2.637e+06 -1.409e+01 1.315e+03 -2.323e-02

Weight Guarantee_Period

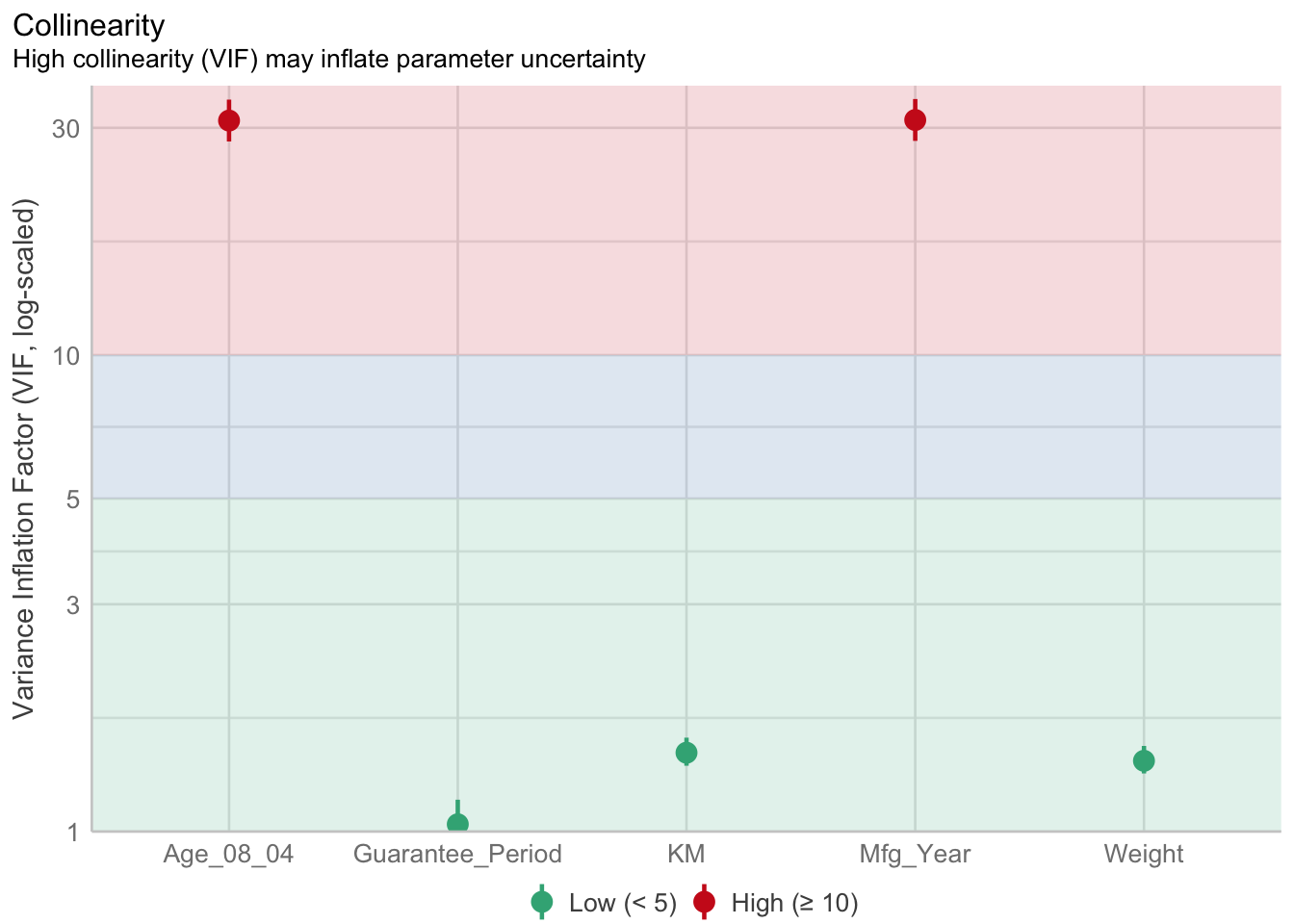

1.903e+01 2.770e+01 Model Diagnostic: checking for multicolinearity:

In the code chunk below, check_collinearity() of the performance package is used to check the model for multicollinearity.

check_collinearity(model)

# Check for Multicollinearity

Low Correlation

             Term  VIF     VIF 95% CI Increased SE Tolerance Tolerance 95% CI
               KM 1.46 [ 1.37,  1.57]         1.21      0.68     [0.64, 0.73]
           Weight 1.41 [ 1.32,  1.51]         1.19      0.71     [0.66, 0.76]
 Guarantee_Period 1.04 [ 1.01,  1.17]         1.02      0.97     [0.86, 0.99]

High Correlation

      Term   VIF     VIF 95% CI Increased SE Tolerance Tolerance 95% CI
 Age_08_04 31.07 [28.08, 34.38]         5.57      0.03     [0.03, 0.04]
  Mfg_Year 31.16 [28.16, 34.48]         5.58      0.03     [0.03, 0.04]

check_c <- check_collinearity(model)
plot(check_c)

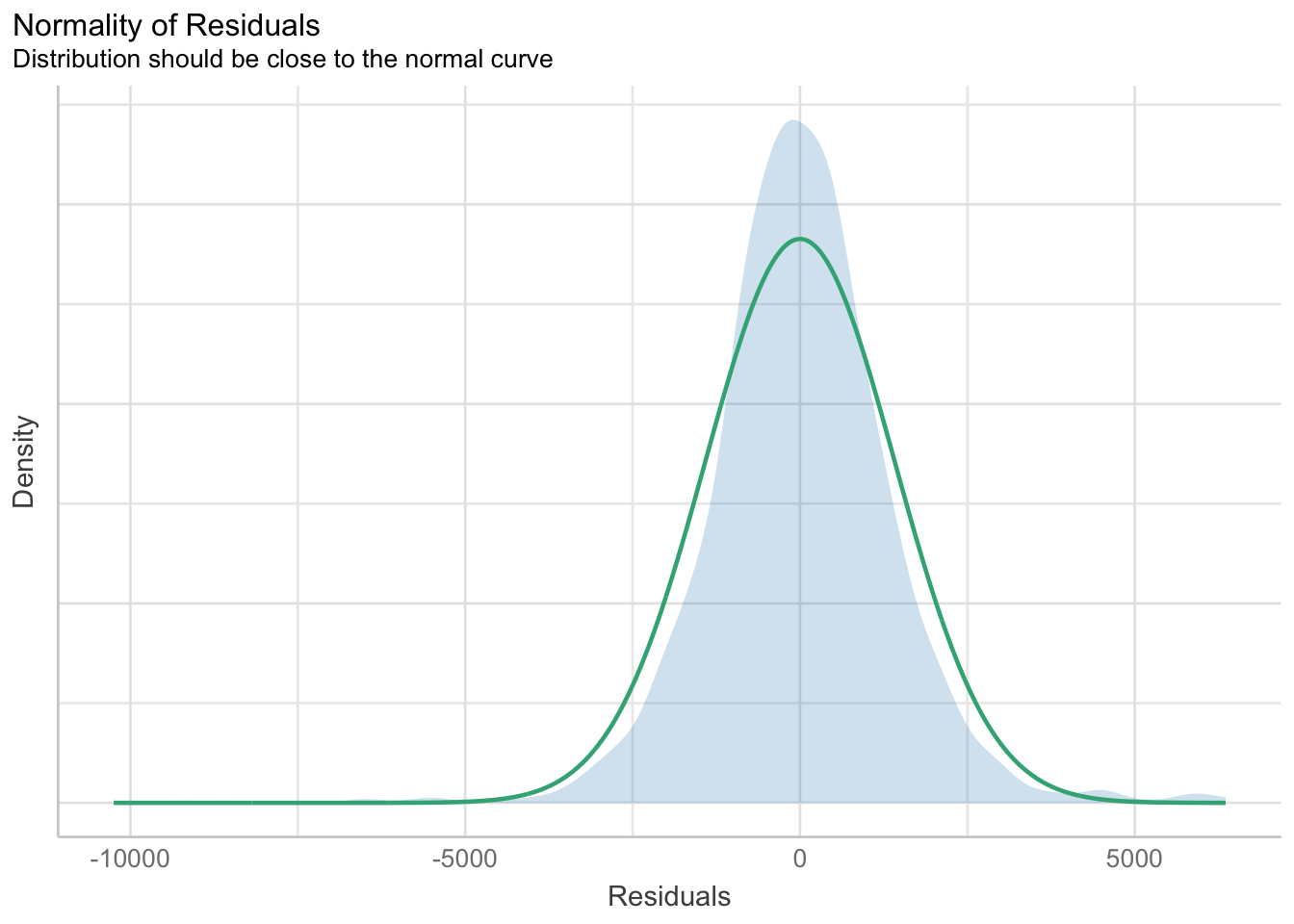
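The columns of the collinearity output are related in a simple way: the VIF of a predictor is 1 / (1 - R²), where R² comes from regressing that predictor on the other predictors. Tolerance is 1 / VIF, and Increased SE is the square root of the VIF (for example, sqrt(31.07) ≈ 5.57). The sketch below recomputes these quantities by hand. It is an illustration only: it uses the built-in mtcars data, and the helper vif_one() is a hypothetical name, not part of the performance package.

```r
# Hypothetical helper: VIF of one predictor given the other predictors.
# Regress the target predictor on the others, then VIF = 1 / (1 - R^2).
vif_one <- function(target, others, data) {
  aux <- lm(reformulate(others, response = target), data = data)
  1 / (1 - summary(aux)$r.squared)
}

# Stand-in predictors from the built-in mtcars data
predictors <- c("disp", "hp", "wt")

vif <- sapply(predictors, function(p) {
  vif_one(p, setdiff(predictors, p), mtcars)
})

print(round(vif, 2))        # variance inflation factors
print(round(1 / vif, 2))    # tolerance
print(round(sqrt(vif), 2))  # factor by which the standard error is inflated
```

For the car resale model, the predictors would be Age_08_04, Mfg_Year, KM, Weight and Guarantee_Period, with car_resale as the data.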

Model Diagnostic: checking normality assumption

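In the code chunk below, check_normality() of the performance package is used to check whether the model residuals are normally distributed. Note that model1 omits Mfg_Year, one of the two terms with a VIF above 30 in the collinearity check.

model1 <- lm(Price ~ Age_08_04 + KM +
               Weight + Guarantee_Period, data = car_resale)

check_n <- check_normality(model1)
plot(check_n)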

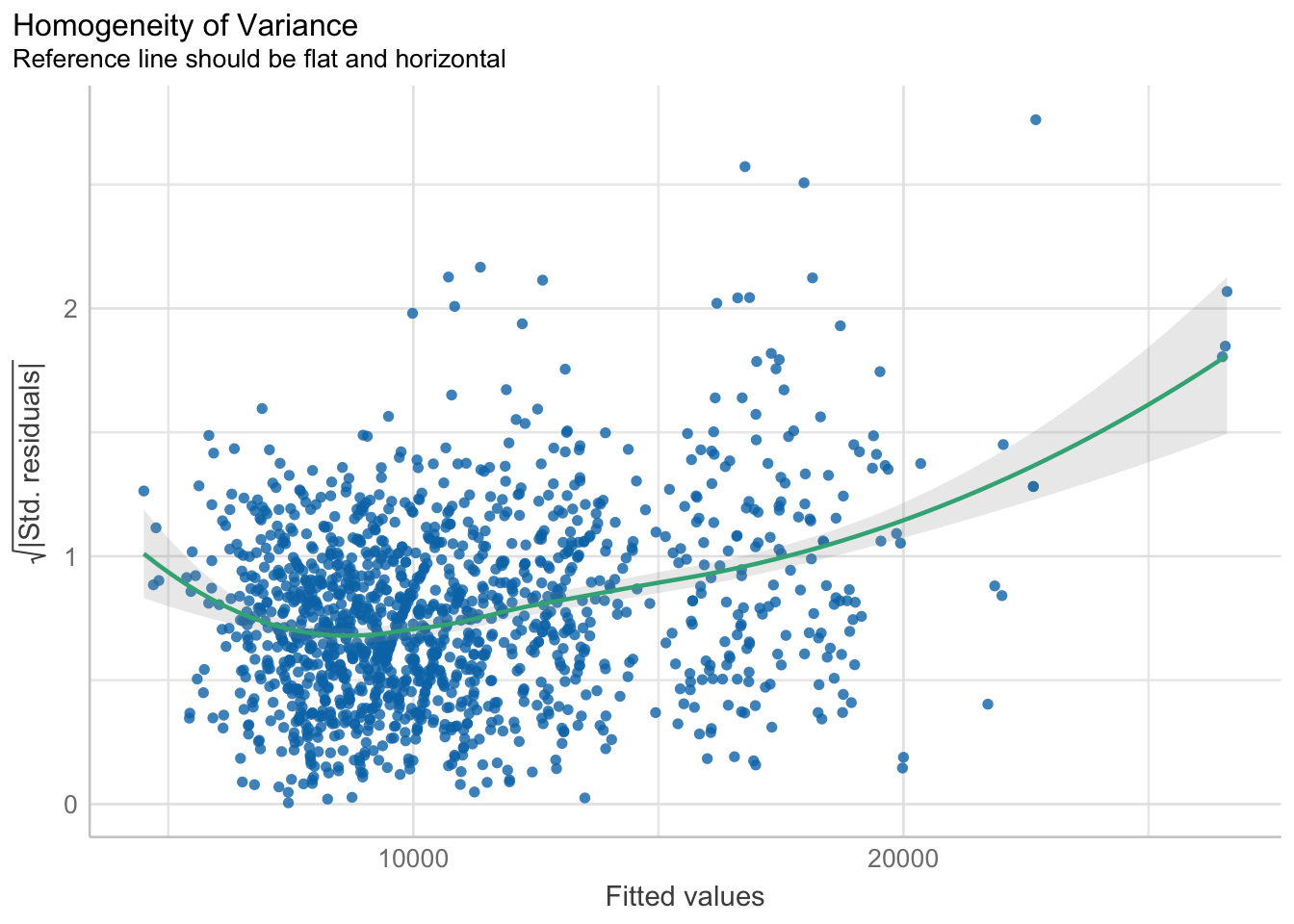
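A comparable check in base R is a Shapiro-Wilk test on the standardized residuals of the model. The sketch below should be read as an approximation of this kind of diagnostic, not as the exact procedure inside check_normality(). It uses a stand-in model fitted on the built-in mtcars data.

```r
# Stand-in model on built-in data
fit <- lm(mpg ~ wt + hp, data = mtcars)

# Shapiro-Wilk test on standardized residuals.
# A small p-value suggests the residuals depart from normality.
res <- shapiro.test(rstandard(fit))

print(res$statistic)
print(res$p.value)
```

For the car resale model, rstandard(model1) would take the place of rstandard(fit).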

Model Diagnostic: Check model for homogeneity of variances

In the code chunk below, check_heteroscedasticity() of the performance package is used to check whether the residuals of model1 have constant variance.

check_h <- check_heteroscedasticity(model1)
plot(check_h)

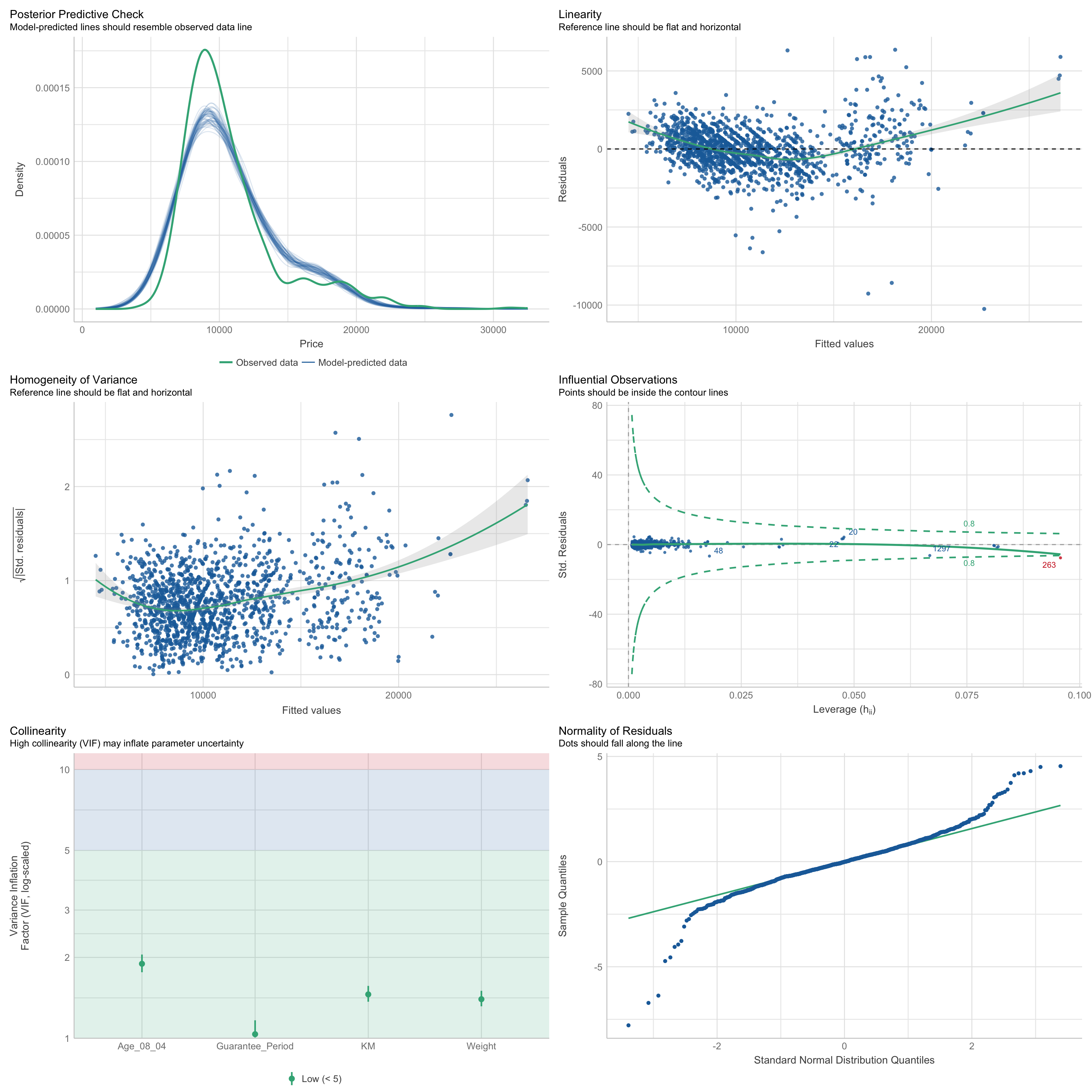
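The idea behind a test for non-constant variance can be sketched in a few lines of base R: if the squared residuals can be predicted from the fitted values, the error variance is not constant. The block below is a simplified Breusch-Pagan-style test (the studentized n·R² form with one degree of freedom). That choice is an assumption made for illustration, so its numbers are not expected to match check_heteroscedasticity() exactly. It uses a stand-in model on the built-in mtcars data.

```r
# Stand-in model on built-in data
fit <- lm(mpg ~ wt + hp, data = mtcars)

# Auxiliary regression: squared residuals on fitted values
e2   <- residuals(fit)^2
yhat <- fitted(fit)
aux  <- lm(e2 ~ yhat)

# Test statistic n * R^2, compared against a chi-square with 1 df
lm_stat <- length(e2) * summary(aux)$r.squared
p_value <- pchisq(lm_stat, df = 1, lower.tail = FALSE)

print(lm_stat)
print(p_value)  # a small p-value suggests heteroscedasticity
```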

Model Diagnostic: Complete check

We can also perform the complete set of diagnostic checks in one step by using check_model().

check_model(model1)

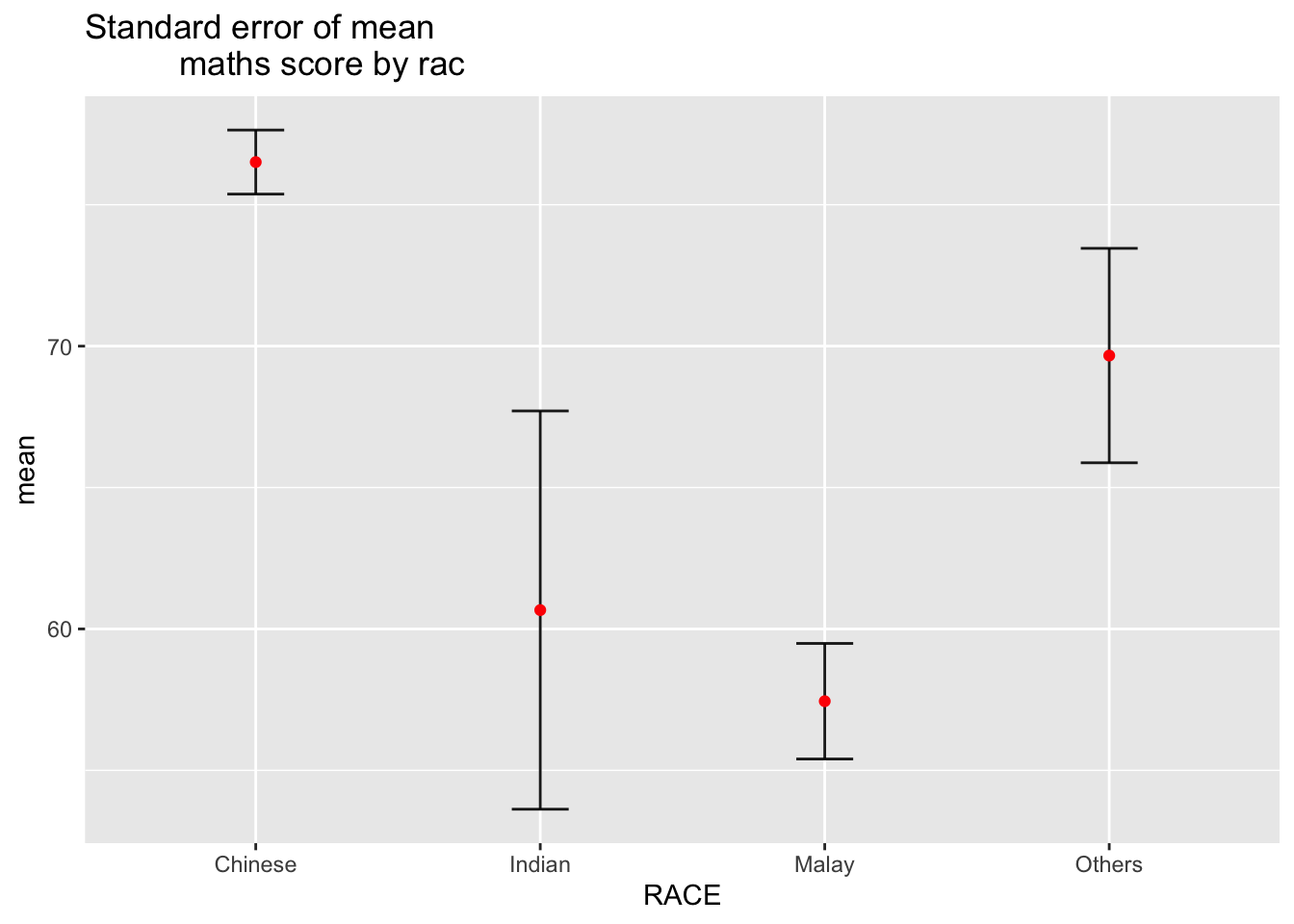

Visualizing the uncertainty of point estimates: ggplot2 methods

The code chunk below does the following:

- groups the observations by RACE,
- computes the count of observations, mean, standard deviation and standard error of Maths by RACE, and
- saves the output as a tibble data table called my_sum.

my_sum <- exam_data %>%
  group_by(RACE) %>%
  summarise(
    n = n(),
    mean = mean(MATHS),
    sd = sd(MATHS)
  ) %>%
  mutate(se = sd / sqrt(n - 1))  # kept as written; the conventional standard error divides by sqrt(n)

knitr::kable(head(my_sum), format = 'html')

| RACE | n | mean | sd | se |
|---|---|---|---|---|
| Chinese | 193 | 76.50777 | 15.69040 | 1.132357 |
| Indian | 12 | 60.66667 | 23.35237 | 7.041005 |
| Malay | 108 | 57.44444 | 21.13478 | 2.043177 |
| Others | 9 | 69.66667 | 10.72381 | 3.791438 |
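The se column can be checked by hand from the n and sd columns. The short sketch below reproduces the value for the Chinese group using the sd / sqrt(n - 1) expression from the code. For comparison, it also computes the conventional standard error sd / sqrt(n), which is slightly smaller because sd() already uses the n - 1 denominator. The variable names are illustrative only.

```r
# Values copied from the Chinese row of the table
sd_chinese <- 15.69040
n_chinese  <- 193

# Standard error as computed in the code chunk: sd / sqrt(n - 1)
se_as_coded <- sd_chinese / sqrt(n_chinese - 1)

# Conventional standard error of the mean: sd / sqrt(n)
se_conventional <- sd_chinese / sqrt(n_chinese)

print(round(se_as_coded, 6))  # 1.132357, matching the table
print(se_conventional)
```

The difference is negligible for large groups but becomes noticeable for the small ones (Indian, n = 12; Others, n = 9).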

ggplot(my_sum) +
  geom_errorbar(
    aes(x = RACE,
        ymin = mean - se,
        ymax = mean + se),
    width = 0.2,
    colour = "black",
    alpha = 0.9,
    size = 0.5) +
  geom_point(aes(x = RACE,
                 y = mean),
             stat = "identity",
             color = "red",
             size = 1.5,
             alpha = 1) +
  ggtitle("Standard error of mean maths score by race")

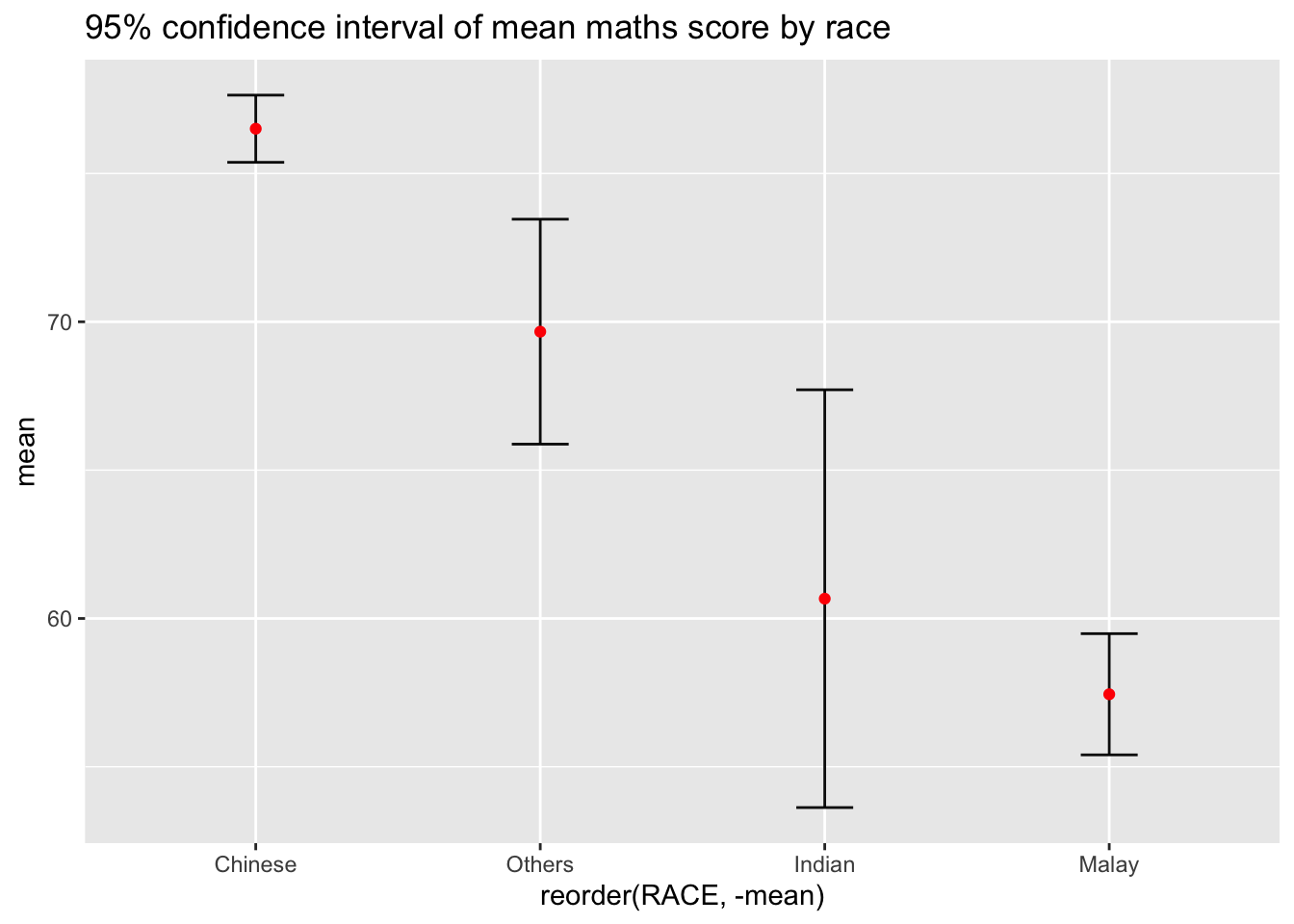

Visualizing the uncertainty of point estimates: ggplot2 methods

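The code chunk below plots the 95% confidence interval of mean maths score by race, with the groups sorted by mean score. The interval is mean ± 1.96 × se.

ggplot(my_sum) +
  geom_errorbar(
    aes(x = reorder(RACE, -mean),
        ymin = mean - 1.96 * se,
        ymax = mean + 1.96 * se),
    width = 0.2,
    colour = "black",
    alpha = 0.95,  # transparency only; the confidence level is set by the 1.96 multiplier
    size = 0.5) +
  geom_point(aes(x = reorder(RACE, -mean),
                 y = mean),
             stat = "identity",
             color = "red",
             size = 1.5,
             alpha = 1) +
  ggtitle("95% confidence interval of mean maths score by race")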
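A confidence interval for a mean is usually drawn as mean ± (critical value) × se. Under a normal approximation the critical value is about 1.96 for 95% and 2.58 for 99%. The sketch below derives these multipliers with qnorm() and, as an optional refinement, the t-based multipliers from qt() using the group sizes in the my_sum table. The t-based values are wider for the small groups.

```r
# Normal critical values for two-sided intervals
z_95 <- qnorm(0.975)
z_99 <- qnorm(0.995)

# Group sizes taken from the my_sum table
n <- c(Chinese = 193, Indian = 12, Malay = 108, Others = 9)

# t-based critical values with n - 1 degrees of freedom per group
t_95 <- qt(0.975, df = n - 1)

print(round(z_95, 2))  # 1.96
print(round(z_99, 2))  # 2.58
print(round(t_95, 2))  # larger than 1.96, most visibly for the small groups
```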

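The code chunk below plots the 99% confidence interval of mean maths score by race (mean ± 2.58 × se) and places it next to an interactive data table. The plot is built from the shared data object d so that the plot and the table refer to the same data.

d <- highlight_key(my_sum)

p <- ggplot(d) +
  geom_errorbar(
    aes(x = reorder(RACE, -mean),
        ymin = mean - 2.58 * se,
        ymax = mean + 2.58 * se),
    width = 0.2,
    colour = "black",
    alpha = 0.99,  # transparency only; the confidence level is set by the 2.58 multiplier
    size = 0.5) +
  geom_point(aes(x = reorder(RACE, -mean),
                 y = mean),
             stat = "identity",
             color = "red",
             size = 1.5,
             alpha = 1) +
  ggtitle("99% confidence interval of mean maths score by race")

pp <- highlight(ggplotly(p))

crosstalk::bscols(pp,
                  DT::datatable(d))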